Multimodal ChatGPT: Speak, Hear, See

Hey — It’s Hussein 👋

Last week’s TC Disrupt 2023 event in San Francisco was the best one yet! It was filled with enthusiastic energy from 13,000 startup founders that was truly contagious.

Of course, my highlight was the packed house of founders listening to my panel on bootstrapping a business, followed by a long Q&A session. It is always fun chatting with founders and investors about startups (watch, read).

TechCrunch Disrupt 2023 Panel

There was a lot of interest in the topic of bootstrapping a business. I am considering writing an article for GPT Hacks on it (let me know what you think). For a full recap of the event, check this post.

For now, let’s get on to today’s big news: ChatGPT is now multimodal. It can see, speak, and hear!

Let’s look at what you need to know about these new features, have a quick chat about what Amazon and Apple are up to, and go through a list of business use cases that I’ve been thinking about since the news came out. ✨

Before we begin… a big thank you to:

Great tool for B2B sales, packed with AI-powered features

Try Reply - an all-in-one AI sales tool

Build prospect lists, look up emails, automate engagement

Summary of ChatGPT updates:

Speak & hear

Very soon, you’ll be able to talk to ChatGPT and get a verbal response. If you’ve been using the mobile app version of ChatGPT, the ability to dictate has been there from the start. This takes it further and introduces the ability to converse with the AI.

This is starting to feel like we will all have our own J.A.R.V.I.S., just like Iron Man!

ChatGPT becoming J.A.R.V.I.S.?

I am curious to see where this goes from here. Some thoughts and updates on the personal assistant front:

I predict that Microsoft will release a Cortana speaker powered by ChatGPT to rival the Apple HomePod and Amazon Alexa.

Apple has been very quiet on updates to Siri. But there are rumors that they are working on their own LLM. Maybe a smarter Siri is coming soon!

Amazon just announced an investment of up to $4B in Anthropic, the makers of Claude. Are we going to see an Alexa powered by the Claude model soon? I say yes.

See

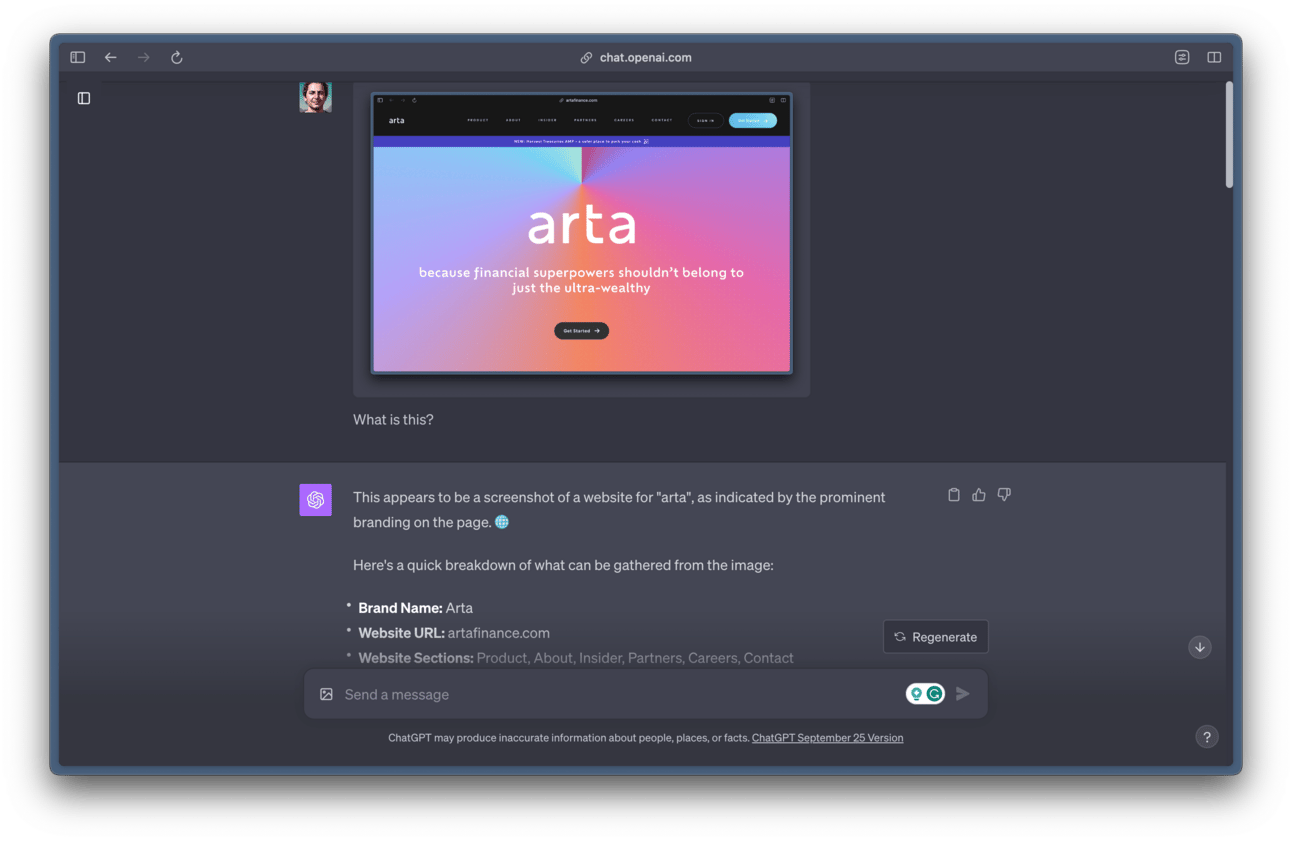

This is truly mind-blowing. You can upload an image to ChatGPT and it will not only recognize the image but also pick out the details of what is in it. It will then converse with you about the image.

I took this feature for a spin and uploaded a screenshot of Arta’s landing page. It recognized that it was a screenshot and gave a breakdown of the name, URL, call to action, website sections, and tagline. It even recognized that I worked there because of my custom instructions. 🤯

ChatGPT analyzing a website screenshot

I was then able to ask it for recommendations on how to improve the landing page and even asked it for code, which it provided. It's really remarkable that all of that worked with very simple prompts. I can’t wait to spend more time with this and uncover its true potential.
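For readers who build with APIs, here is a minimal sketch of how a screenshot and a prompt could be packaged into a single chat message. It assumes the content-parts message format that OpenAI's Chat Completions API uses for image input. The function name, the model name, and the prompt are placeholders I made up for illustration, and nothing here is sent over the network.

```python
import base64
import json


def build_vision_request(image_bytes: bytes, prompt: str,
                         model: str = "vision-model-placeholder") -> dict:
    """Package a prompt and a PNG screenshot into one chat request body.

    The model name is a hypothetical placeholder; swap in whichever
    vision-capable model you have access to.
    """
    # The image travels inline as a base64-encoded data URL.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:image/png;base64,{encoded}"
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Stand-in bytes (just the PNG signature), not a real screenshot.
    fake_screenshot = b"\x89PNG\r\n\x1a\n"
    body = build_vision_request(
        fake_screenshot, "How can I improve this landing page?"
    )
    print(json.dumps(body, indent=2))
```

The text part and the image part sit in the same user message, which is what lets a follow-up like "now give me the code" refer back to the screenshot.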

When are the features being released?

These features will be made available to users in batches over the next two weeks. I got access this morning and started experimenting with them.

Speak and hear capabilities are coming to the mobile apps only for now, while the seeing capabilities will be coming to both web and mobile.

🤑 Note that you need a ChatGPT Plus subscription to use these new features.

Bing browsing is back btw.

For those who remember, Bing browsing, which gave ChatGPT a way to pull real-time information from the web, was disabled by OpenAI due to concerns about users abusing it.

This week, the feature was turned back on and is now available again.

I was planning on writing an article on Bing browsing being back in ChatGPT and how to use it, but that got put on hold to focus on the new multimodal capabilities. I’ll come back to this topic in a couple of weeks.

ChatGPT multimodal business use cases

🤯 The possibilities and business use cases for ChatGPT vision and voice are endless. The more I think about this, the more ideas I have. I can’t wait to see what everyone builds with these new capabilities.

Here’s a list of 10 business use cases to get you started:

1. Your client uploads loan paperwork. Your AI loan processor analyzes the content, reviews it for fraud, compares it to the client’s records and contract, goes through your SOPs, and prepares it for a loan officer to give final approval. That last step can be automated with time too… An AI-driven back office for a lender just became a reality.

2. A shopper visits your e-commerce site and uploads an image. Your AI personal shopper looks at the image, reviews the shopper’s purchase history and preferences, looks at your inventory, and creates a shopping list for the buyer to browse.

3. A customer calls your support line. Your AI service rep answers with zero hold time. The customer asks a question in ANY language! The AI reviews the customer’s records, their previous calls, messages, tweets (posts?), list of issues, etc., along with your SOPs, in less than a second and answers the question in the caller’s language. The AI can have a full conversation with the customer and be given guidelines on exceptions, escalations, discounts, etc.

4. Your field agent is on a repair site, takes a picture of the issue to be fixed, and uploads it. Your AI field ops manager reviews training manuals and repair guides and gives your field agent an overview of the problem and step-by-step instructions on how to fix the problem.

5. Your radiologist takes an X-ray. Your AI radiologist takes a first look, then sends the X-ray with comments and highlighted preliminary findings for the doctor to review further.

6. You take a picture of your retail shelves, and your AI shopper reviews it for stock levels (and gets an order ready), compliance (and gives you a warning if you are breaching contracts), and layout recommendations (to max profits based on projections).

7. Social listening tools that monitor text in social media for your brands can now also review images and voice on TikTok, Insta, Twitter (X), etc., and give you a sentiment read on your latest release.

8. "Chat with my PDF" apps have been around for a while now, but with these new features, they should become much more accurate and reliable. That makes use cases like reviewing and summarizing legal documents much more attainable.

9. Restaurant-goers coming into your joint can chat with your online menu, share dietary restrictions, preferences, and cravings, and ask questions. The AI menu then gives recommendations based on what they want and on the menu items the restaurant is pushing, finding a balance for both.

10. Candidates submit their resume for your job posting. Your AI recruiter reviews the resume and screens it without needing to rely on keywords only. No more missing out on good candidates who didn’t use the specific keyword, or getting inundated with bad candidates who happened to use the right keyword.

These past few weeks have felt like major leaps in tech. I can’t remember the last time in tech history when we had so many leaps back to back. Truly remarkable.

Are you building with OpenAI APIs? I am curious what you are doing. Reply and let me know. I’ll do a recap of projects and startups being worked on by GPT Hacks readers.

See you next Wednesday — Hussein ✌️

P.S. If you’d like to sponsor, reply. 5.4k founders and entrepreneurs are waiting for you.

P.P.S. If you live in or visit San Diego, sign up here to be notified of tech and founder mixers I organize in the area. Would love to meet you there.

Enjoy the newsletter? Please forward this to a friend. It only takes 10 seconds. Writing this one took over 10,000 seconds. (Want rewards? 🎁 Use your custom link).

New around here? Join the newsletter (it's free).